Responsible Tech Careers #9: Navigating Layoffs and Career Transitions

Plus Bobby Zipp’s tips for applying to GovTech roles, mentor advice from Krti Tallam, & 30+ new roles from the Responsible Tech Job Board!

Hello!

Welcome to All Tech Is Human’s Responsible Tech Careers newsletter #9! This edition features individuals who have successfully navigated career transitions and are offering their tips and insights to the Responsible Tech community.

We’re featuring a webinar for those navigating layoffs from Big Tech, tips for those transitioning into GovTech roles at the state and local level, and great advice from one of our mentors, Krti Tallam (Principal Investigator & Senior Scientist at the AI Security Lab, UC Berkeley; Founder).

Check out the overview and dig into the details below!

🧠 In this newsletter, you’ll find:

Featured Webinar: Navigating Layoffs and Career Transitions in Responsible Tech

Featured Resource: Bobby Zipp’s tips for applying to GovTech roles

Advice from an ATIH Mentor: Krti Tallam - Principal Investigator & Senior Scientist, AI Security Lab, UC Berkeley; Founder

30+ new Responsible Tech roles!

🤝 All of these roles and hundreds more can be found on All Tech Is Human’s Responsible Tech Job Board. In addition to our job board, you will find that numerous Responsible Tech jobs are being shared and discussed every day through our large Slack community, which includes 12k people across 105 countries (Sign In | Apply).

✨ Another great way to grow your career in Responsible Tech is by attending our regular livestreams! Join us this Thursday at 1pm ET for Responsible Tech Author Series featuring Adele Zeynep Walton, author of Logging Off: The Human Cost of Our Digital World.

👑 Also consider subscribing to All Tech Is Human’s newsletter, focusing on issues and opportunities in the Responsible Tech ecosystem at-large and arriving in your inbox every other week (opposite weeks from this Careers Newsletter).

Now, onto the newsletter! 👇

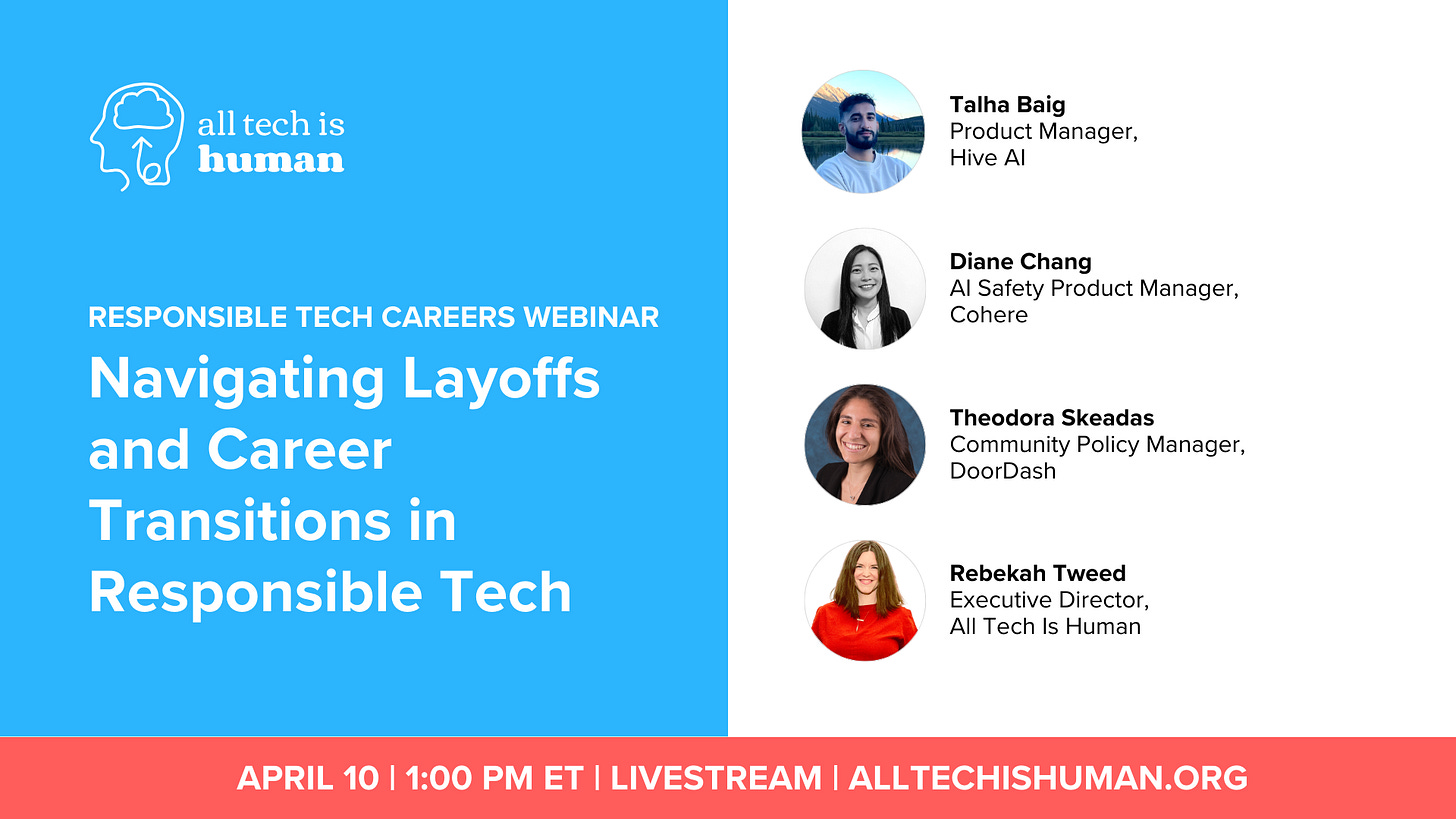

Featured Webinar:

Navigating Layoffs and Career Transitions in Responsible Tech

April 10th 1pm ET | Webinar

Join Talha Baig (Product Manager, Hive AI) and Diane Chang (AI Safety Product Manager, Cohere) in conversation with Theodora Skeadas (Community Policy Manager, DoorDash), moderated by All Tech Is Human’s Rebekah Tweed. This discussion will focus on how responsible technologists can navigate layoffs and career transitions. Panelists will share their experiences moving to startups and other opportunities after being laid off from Big Tech roles. Tune in on April 10th at 1pm ET to join the conversation, submit your questions, and connect with other Responsible Tech practitioners!

Featured Resource:

Robert “Bobby” Zipp, an All Tech Is Human mentor and longtime community member, is a Product Manager at the Manhattan District Attorney’s office. He recently took to LinkedIn to offer tips on how to apply to state and local government tech roles, especially in New York City.

Check out his post, which includes:

❇️ An explanation of civil service titles

❇️ Contrasting competitive vs. non-competitive roles

❇️ Musings on the pace of the hiring process

❇️ Tips on how to network for GovTech roles in the public sector

Be sure to follow Bobby on LinkedIn, as he often posts helpful tips and insights!

🗣 Advice from an All Tech Is Human Mentor: Krti Tallam - Principal Investigator & Senior Scientist, AI Security Lab, UC Berkeley; Founder


“My work sits at the intersection of AI security and technical governance. I focus on ensuring frontier AI systems are resilient, transparent and aligned with our future and with the public interest by developing safety mechanisms against adversarial attacks, building frameworks for data provenance and trust, and engaging in policy discussions on AI risk management and national security. I also contribute to federal AI governance efforts, working with stakeholders across high-stakes AI applications to safeguard against emerging AI risks. At its core, my work ensures that AI systems remain safe, accountable and equitable, shaping a future where AI innovation does not outpace governance and security.”

“My journey has been deeply interdisciplinary, spanning scientific machine learning, cybersecurity and AI for scientific applications. Starting in AI for climate science and sustainability, I felt it more pressing to have diverse and minority scholar representation in AI security by addressing real-world challenges in AI robustness, adversarial defenses and secure model deployment. Through leadership roles in AI governance, directing pre-seed, seed and Series A companies, and national security AI, I have been able to bridge technical innovation with policy impact, securing funding and guiding large-scale AI initiatives. I also work closely with global AI safety organizations, federal agencies and industry leaders to shape the future of responsible AI.”

“Advice: Understand AI governance and policy frameworks; tech alone doesn’t solve societal challenges. Build a strong technical foundation in a specific topic in AI. Stay adaptable.”

Responsible Tech Roles (listed from entry to senior roles)

🎉 FEATURED ROLES 🎉

Beeck Center for Social Impact + Innovation - Fellow, Benefits Data Interoperability

At the Beeck Center for Social Impact + Innovation at Georgetown University, our Innovation and Incubation (I+I) Fellows are leading experts who pilot new and emerging projects and initiatives. I+I Fellows are instrumental in driving the Center’s mission to address complex societal challenges through innovative solutions. The Benefits Data Interoperability Fellow will lead and execute a transformative 12-month research project to advance privacy-protected data sharing and matching, improving the accuracy, integrity, and efficiency of benefits delivery for programs like SNAP, WIC, Medicaid/CHIP, and potentially unemployment insurance. Building on the Beeck Center’s work in benefits delivery, the Fellow will analyze existing research, data sets, policies, laws, and initiatives at the federal and state levels to map the current landscape of public benefits data sharing and interoperability. They will produce original research identifying the challenges, opportunities, pros, and cons of different ways existing data sets can be integrated, combined, or shared using privacy-protected technology, so that benefits programs can be delivered more accurately, effectively, and efficiently. The Fellow will document their work, culminating in a final publication with actionable recommendations at the conclusion of the position’s one-year term.

Consumer Reports - AI Observability Systems Consultant

AskCR, CR’s AI-powered advisor that answers your questions based on CR’s trusted research and product data, is evolving to deliver increasingly relevant and reliable information to consumers. We’re seeking a creative consultant to help build next-generation observability, analysis, and post-processing systems for AskCR. This consultant opportunity will focus on designing and implementing real-time guardrails, hallucination detection, and post-processing analysis pipelines to ensure AskCR delivers safe, reliable, and high-quality information to consumers.

Data & Society - Post-Doctoral Fellow, Trustworthy Infrastructures

Data & Society is seeking a post-doctoral research fellow for a two-year appointment for the Trustworthy Infrastructures program. The Trustworthy Infrastructures team researches community-driven responses to technology’s entrance into the most intimate parts of our working, material, personal, and public lives. We are interested in the sociopolitical conditions created by technological expansion, and how communities reassert their own power, agency, and knowledge in response. The fellow will be working primarily on the Nodes and Conduits project, which explores how post-industrial communities adapt to socioeconomic transformations driven by the tech industry. They will also have the opportunity to pursue their own research agenda. This role is shaped by an emergent body of work on the transformational role of the tech industry in driving the evolution of front-line communities most vulnerable to its expansion across the globe. As towns across Appalachia, the American Southwest, and large expanses across the Global Majority are re-organizing labor, land, democratic institutions, and social relationships to make room for AI’s infrastructure, this project aims to better understand the impacts on the lives of those at the center of this future. In addition to examining how communities cope with and adapt to these industrial transformations, this project aims to uncover the narratives of adaptation that contribute to deepening inequality in those sites, and the role of speculation in this evolution.

Entry Level

AstraZeneca - Enterprise AI Governance Operational Associate

The Enterprise AI Governance Operational Associate is an exciting role to support AstraZeneca’s work to ensure that AI is developed, deployed, and used in a safe, responsible, and ethical way. This is a unique opportunity to contribute to innovative and cutting-edge work, making a meaningful difference to our organisation. You will become a core part of AstraZeneca’s Enterprise AI Governance team, which is part of the Enterprise Data Office. The mission of Enterprise AI Governance is to ensure that AstraZeneca can maximise the value of AI while managing risks, complying with regulations, and protecting our business and patients. In this role, you will be responsible for ensuring that AstraZeneca’s AI governance processes are operating smoothly and efficiently, and that we are effectively responding to and prioritising business initiatives and requests. You will also implement changes that enable the team to effectively manage AI governance at scale. A typical week will include executing internal AI governance communications campaigns, presenting on AI governance at forums and town hall meetings, creating content for AI literacy and training sessions, and building dashboards to report metrics for AI risk and compliance tracking. To continue maturing our AI governance approach and to enable compliance by design, we require an exceptionally well-organised and process-oriented individual to become the Enterprise AI Governance Operational Associate Manager. The successful candidate will have a background in project management, team coordination, and data analysis.

Consumer Reports - Privacy Compliance Research Consultant

Permission Slip is a free app which allows users to exercise their data privacy rights by requesting that companies delete or stop selling their data. Companies that we send these data rights requests to each follow their own process for receiving and actioning the requests. As part of delivering these requests at scale, it’s imperative that we maintain our understanding of these company processes. We are looking for someone to audit the data rights requests processes of the ~400 companies supported in Permission Slip. This consulting project will have meaningful implications for protecting consumer privacy and may contribute to published work depending on the results of the audit.

ControlAI - Policy Advisor

We are expanding our UK policy team and seeking a talented individual to drive forward our parliamentary engagement on AI security and safety. The role is focused on building public and political support for addressing the extinction risk from superintelligence. Our priority is to expand our base of parliamentary support and translate the growing concern about AI into concrete policy measures. We are looking for a skilled policy professional who can navigate the technical complexities of AI policy and the practical realities of Westminster.

Mila - Institut québécois d'intelligence artificielle - AI Governance Advisor

Founded by Professor Yoshua Bengio of the University of Montreal, Mila brings together researchers specialized in artificial intelligence, and more specifically in machine learning, deep learning and reinforcement learning. The AI governance advisor will play a key role in supporting organizations toward the adoption of responsible practices adapted to their respective realities. This person will contribute to the development of strategies, the dissemination of best practices and the implementation of initiatives that promote ethical and innovative AI governance. Their role will be to work closely with industry partners and other organizations, guiding them in the development and implementation of cutting-edge frameworks and practices. The person in this role will have the opportunity to collaborate with a wide range of stakeholders, build and facilitate a community of practice and contribute to the development of training content to strengthen the understanding and application of responsible AI principles.

Teton County, Administration/Office of Innovation and Engagement - Engagement and User Experience (UX) Design Specialist

SUMMARY: This position will strengthen Teton County’s ability to deliver services by leading engagement, research, and design across key initiatives. This position is responsible for leading and advising on County public participation and engagement initiatives by working with departments to research, design, deliver and evaluate strategies, and report on outcomes. This position plays a central role in designing, delivering, and analyzing participatory processes resulting in improved decision making.

Early Career

AI Hub, NYU McSilver Institute for Poverty Policy and Research - DevOps Engineer Consultant

The NYU McSilver Institute for Poverty Policy and Research is committed to creating new knowledge about the root causes of poverty, developing evidence-based interventions to address its consequences, and rapidly translating research findings into action through policy and best practices. The AI Hub at NYU McSilver is inviting proposals for a consulting agreement to engage the services of an experienced DevOps Engineer. The AI Hub has been established to investigate how artificial intelligence-driven systems can equitably address poverty and challenges relating to race and public health and provide thought leadership on the implications. At the AI Hub, we are developing software applications to enable more efficient, equitable, and accessible data analysis and insights for public health research and policy.

AI Hub, NYU McSilver Institute for Poverty Policy and Research - UI/UX Designer Consultant

The NYU McSilver Institute for Poverty Policy and Research is committed to creating new knowledge about the root causes of poverty, developing evidence-based interventions to address its consequences, and rapidly translating research findings into action through policy and best practices. The AI Hub is inviting proposals for a consulting agreement with a creative and user-centered UI/UX Designer to support the design of a cutting-edge application for suicide prevention. The AI Hub at McSilver has been established to investigate how artificial intelligence-driven systems can equitably address poverty and challenges relating to race and public health and provide thought leadership on the implications.

Creative Commons - Copyright and AI Counsel

Creative Commons is made up of a group of 20 people, all passionate about open access and the potential it holds. The Copyright and AI Counsel serves as a subject matter expert and thought leader at Creative Commons (CC). The role includes internally-facing research, analysis, and strategy development, as well as public-facing communications and education.

Georgetown University - Software Engineer, Center for Security and Emerging Technology - Walsh School of Foreign Service

A policy research organization within Georgetown University’s Walsh School of Foreign Service, the Center for Security and Emerging Technology (CSET) provides decision-makers with data-driven analysis on the security implications of emerging technologies. CSET is currently focusing on the effects of progress in artificial intelligence (AI), advanced computing, and biotechnology. We seek to prepare a new generation of decision-makers to address the challenges and opportunities of emerging technologies. CSET's Emerging Technology Observatory is a free public platform for data and insight on critical emerging technology issues. Building on CSET's unique data and analytic capabilities, the ETO team builds analytic tools and datasets to help users across government, academia, nonprofits and the private sector understand and act on global emerging technology trends. Under the School of Foreign Service, CSET is hiring a Software Engineer. The Software Engineer will be a generalist who can flex between full-stack web development, web scraping, data preparation, and deploying applications on the Google Cloud Platform. The Software Engineer will primarily support the CSET Data Science Team’s Emerging Technology Observatory (ETO) effort, working with other software engineers, data scientists, and researchers on a small but passionate team focused on building high-quality public tools and datasets to inform critical decisions on emerging technology issues.

Novo Nordisk - Responsible AI Scientist

As an AI Scientist in the Responsible AI Team, you will help ensure that Novo Nordisk is at the forefront when it comes to good machine learning practices that set patient safety and privacy at the center. As a technical subject matter expert, you will help translate legal and regulatory requirements into technical solutions, including ensuring that there are well-established technical guidelines at Novo Nordisk for how to responsibly develop and use AI. You will have a pivotal role in ensuring that the company guidelines translate into operational actions on AI/ML solutions, as well as implementing responsible AI solutions and methods on both a use-case and toolbox level. Novo Nordisk has an established process for addressing legal requirements, and you will additionally take part in some of the focus groups and expert panels that are addressing regulatory requirements around AI/ML.

Mid-Career

Ada Lovelace Institute - Associate Director, Emerging Technology and Industry Practice

The Ada Lovelace Institute (Ada) is hiring an Associate Director to lead our Emerging Technology & Industry Practice research domain and collectively set its agenda and workplan in our next strategy period (to 2029). This role will report to the Director and manage a team of 4-8 researchers working on projects aimed at: evidencing the utility, efficacy and impacts of emerging technologies on impacted communities, primarily in the UK; assessing the effectiveness and impacts of emerging AI governance and accountability practices like audits, impact assessments, and model evaluations; encouraging the adoption of these practices by a wider set of public and private sector actors; and ensuring that global proposals for AI and data regulation reflect our research into emerging accountability practices and technologies.

aiEDU - Senior Programs Lead, West Coast

The AI Education Project (aiEDU) is a growing 501(c)(3) non-profit that creates equitable educational experiences to excite and empower learners everywhere with AI literacy. We educate students, especially those disproportionately impacted by artificial intelligence and automation, with the conceptual knowledge and skills they need to thrive as future workers, creators, consumers, and citizens. As the Senior Programs Lead, West Coast at The AI Education Project (aiEDU), you will advance AI literacy for students by developing and supporting partnerships with school districts and education agencies across multiple states. Your primary focus will be on fostering robust relationships with partners and ensuring the effective delivery of our services. You will be the key liaison between aiEDU and our partner school districts. Your responsibilities include coordinating with internal teams and leadership as well as tailoring our offerings to meet the specific requirements of each district.

Aptiv - Project Manager, Generative AI

Aptiv is a global technology company that designs, develops, and manufactures software and hardware solutions to enable a safer, greener and more connected future. Our solutions help customers around the world to create software-designed platforms with advanced safety features, electrified architectures, and intelligent connectivity. At Aptiv, we are dedicated to developing innovative solutions that make transportation safer, greener, and more connected. As a Project Manager, you will play a crucial role in driving the development of innovative tools that enhance efficiency and productivity across Aptiv. This is not just a job; it's an opportunity to lead the charge in inventing cutting-edge tools that will redefine the ways of working and shape the future of automotive technology.

AstraZeneca - Enterprise AI Governance Policy Manager

The ‘Enterprise AI Governance Policy Manager’ is a high-profile role to drive forward AstraZeneca’s work to ensure that AI is developed, deployed and used in a safe, responsible and ethical way. This is a unique opportunity to lead innovative and cutting-edge work, which makes a meaningful difference to our organisation. You will become a core part of AstraZeneca’s Enterprise AI Governance team, which is part of the Enterprise Data Office. The mission of Enterprise AI Governance is to ensure that AstraZeneca can maximise the value of AI, whilst managing risks, complying with regulations and protecting our business and patients. In this role, you will lead work which enables AstraZeneca to prepare for, and comply with, AI regulations and standards. This will involve developing new policies and guidance, designing and delivering AI training and education, reviewing and advising on sensitive AI use cases, and tracking and reporting on compliance maturity levels across the company. You will be a subject matter expert in AI regulations, like the EU AI Act, as well as regulations in other jurisdictions like China, the U.S. and beyond. Crucially, you will leverage your knowledge to ensure that our AI governance framework and processes are enabling effective AI regulatory compliance. Finally, you will engage externally to position AZ as a leader in this space and shape the regulatory environment.

AstraZeneca - Enterprise AI Governance Product Manager

The Enterprise AI Governance Product Manager is a high-profile role designed to drive forward AstraZeneca’s commitment to ensuring that AI is developed, deployed, and used in a safe, responsible, and ethical manner. This is a unique opportunity to lead innovative and cutting-edge work that makes a meaningful difference to our organization. You will become a core part of AstraZeneca’s Enterprise AI Governance team, which is part of the Enterprise Data Office. The mission of Enterprise AI Governance is to ensure that AstraZeneca can maximize the value of AI while managing risks, complying with regulations, and protecting our business and patients. In this role, you will drive efforts to enable AstraZeneca to manage AI governance at scale, utilizing world-class technology platforms and capabilities. We leverage technology to deliver and enable all aspects of AI governance, including cataloging AI use cases, applications, and models, performing AI risk assessments, tracking and reporting on AI usage, risk, and compliance, and testing AI performance. To continue maturing our AI governance approach and enable compliance by design, we require an exceptional, product-oriented individual to become the Enterprise AI Governance Product Manager. The successful candidate will be responsible for ensuring AI governance platforms and tools are developed and rolled out effectively, driving user adoption, and maintaining alignment with our AI governance policy framework.

Capgemini - Agentic AI Business and Ecosystem Director

Capgemini's Applied Innovation Exchange (AIE) is seeking an Agentic AI Business and Ecosystem Director to accelerate AI-powered enterprise transformation, shape partnerships in the AI ecosystem, and help drive the adoption of multi-agent AI technologies across industries. This role is at the intersection of Agentic AI, business strategy, and ecosystem engagement, focusing on identifying high-impact AI opportunities and forging key industry partnerships. As a leader in this space, you will shape Agentic AI initiatives for enterprises, engage with the startup ecosystem, and drive strategic consulting efforts to ensure AI delivers measurable business outcomes.

Center for Strategic and International Studies - Deputy Director & Senior Fellow - Wadhwani AI Center

The Center for Strategic and International Studies is a non-profit, bipartisan public policy organization established in 1962 to provide strategic insights and practical policy solutions to decision makers concerned with global security and prosperity. The CSIS Wadhwani AI Center delves into crucial topics at the intersection of policy, AI, and other advanced technologies, focusing on three key themes: Governance & Regulation, Geopolitics, and National Security. Issues explored include how governments can balance the benefits of increased technology adoption while mitigating potential risks; responsible strategies for the U.S. Department of Defense and Intelligence Community to harness advanced technology; practical and desirable regulations and governance structures concerning AI and other advanced technologies; and what actions the United States should take to advance U.S. and allied economic and security interests. The analyses aim to provide effective policy solutions that address the challenges posed by the rapid evolution of emerging technologies. The Center seeks to answer vitally important questions about the future of artificial intelligence and its implications for the global economy and international security. The Wadhwani AI Center seeks a highly motivated Deputy Director and Senior Fellow to play a key role in shaping and advancing the program’s research agenda as it pertains to international artificial intelligence (AI) governance, regulation, and national security.

DataKind - Director, Research

DataKind is seeking a visionary Director, Research to lead our groundbreaking research initiatives in the higher education data science space. This is a unique opportunity to shape industry conversations and establish DataKind as a thought leader addressing critical system integration challenges in university data and technology systems. As our Director, Research, you'll conduct cutting-edge research that drives strategic insights, collaborate with our project team and external stakeholders, and develop thought-provoking content that influences the field. Your work will directly contribute to improving student graduation outcomes at scale across higher education institutions nationwide.

International Biosecurity and Biosafety Initiative for Science - Senior Bioinformatics Engineer – DNA Synthesis Screening

The Senior Bioinformatics Engineer will aid in the continued development and optimisation of the Common Mechanism, our open-source sequence screening software. In the past year, IBBIS released our software package, acquired our first users, validated our performance against >1,000,000 sequences as part of an international testing collaboration, and demonstrated resilience to pathogen sequences redesigned with AI. We are now looking to expand our team to ensure that the Common Mechanism can act as an effective global baseline for synthesis screening. You’ll join as a core member of our technical team, directly impacting how DNA synthesis is screened worldwide and helping to advance our mission of responsible, flourishing biotechnology. You’ll solve thorny sequence classification problems, handle vulnerability disclosures, and participate in international standards development.

OpenAI - National Security Risk Mitigation Lead, Global Affairs

We are looking for an experienced leader to drive OpenAI’s external collaborations and policy work on AI-related national security risks. Reporting to the Head of National Security Policy, you will also work closely with OpenAI’s Safety Systems organization to surface, study, and respond to risks posed by AI capabilities in areas such as cyber, CBRN, or the misuse of autonomous agents. You will closely collaborate with OpenAI’s pathfinding technical teams to understand and communicate rapid AI advancements and associated safety and preparedness implications. You will be responsible for defining and executing on our strategy for engaging with risk-relevant national security stakeholders to address these challenges at scale, including through public-private partnerships and joint evaluations of AI capabilities.

Openchip - Director, AI Safety

The Director of AI Safety at Openchip will be responsible for ensuring that our AI systems are secure, ethical, and aligned with industry safety standards. This role demands a vertically integrated approach, addressing AI security from high-level applications down to low-level implementation. The ideal candidate will lead the development of safety mechanisms, guardrails, and validation frameworks to build trusted AI systems.

Oxford Internet Institute - Departmental Research Lecturer in AI, Government & Policy

We are seeking to appoint a Departmental Research Lecturer in AI, Government & Policy to conduct research and teaching and also play an integral part in the activities of the department. This is a full-time role, starting 1st July 2025 (or as soon as possible thereafter) for a fixed term period until 27 October 2026. You will be based in Oxford as your normal place of work. The successful candidate will be responsible for contributing to an excellent programme of research supporting the responsible and effective use of AI and other new information technologies in government decision-making or public service delivery, and for teaching in the graduate programmes of the Oxford Internet Institute. We are particularly interested in candidates conducting research on government digitalisation and innovation, and/or uses of AI for policy design and service delivery.

PepsiCo - Responsible AI Enablement Manager

As a member of the Responsible AI Office, the Manager - Responsible AI Enablement is responsible for ensuring the Responsible AI framework effectively manages the risk of AI across PepsiCo. The role creates and maintains the Responsible AI framework, policies and standards, and drives the enabling culture for the framework to be realized. The successful candidate will have a deep understanding of the emerging area of AI Governance, a broad understanding of the PepsiCo business, and skills and experience in driving outcomes across complex projects through excellent stakeholder management.

RAND - Research Lead - Securing Frontier AI

RAND's Meselson Center, part of the Global and Emerging Risks (GER) division, is seeking an accomplished technical leader to drive our ambitious frontier AI security research agenda. As Research Lead - Securing Frontier AI, you'll direct a comprehensive research portfolio focused on ensuring that the world's most important AI systems are appropriately secured and addressing critical challenges at the intersection of AI, information security, and national security. You will be responsible for managing significant research budgets and personnel, overseeing complex technical research and policy analysis projects, and leading multidisciplinary teams of policy researchers, engineers, and scientists. Your work will shape recommendations for the White House, regulatory agencies, the intelligence community, other national governments, and industry leaders. Your team will communicate findings to both technical and non-technical audiences through quick-turnaround policy briefs and detailed technical analyses. A recent example of one of our research products is the Securing AI Model Weights report, which explored protecting frontier AI model weights from theft and misuse.

Salesforce - Senior or Principal Data Scientist - Technical AI Ethicist

Salesforce’s Office of Ethical and Humane Use is seeking an experienced responsible AI data scientist with an adversarial approach and experience conducting ethical red teaming to contribute to our ethical red teaming practice. In this role, you will help us gain a deep understanding of how our models and products may be leveraged by malign actors or through unanticipated use to cause harm. In addition to adversarial testing, you will analyze current safety trends and develop solutions to detect and mitigate risk, while working cross-functionally with security, engineering, data science, and AI Research teams. You will bring technical depth to the assessment of AI products, models, and applications in order to identify the best technical mitigations for identified risks. The ideal candidate will have technical experience in generative as well as predictive artificial intelligence and in responsible / ethical AI.

Senior/Executive Level

Gartner - Director Analyst, Privacy Program Leadership and Technology

As a Director Analyst aligned with the Cybersecurity and Privacy Program Management team, you will deliver advice and thought leadership in privacy management through published research, conversations with clients (Inquiry), teleconferences, and client meetings. You will bring Gartner's research and tools to life and ask insightful questions to diagnose the causes of client challenges and recommend the best course of action. The role requires a good understanding of privacy management programs and hands-on experience with aspects such as privacy impact assessments, privacy audits, privacy policies, and the role and position of a privacy officer.

GoFundMe - Director, Legal - Privacy, Cybersecurity and AI Governance

GoFundMe is seeking a Director, Legal - Privacy, Cybersecurity and AI Governance to manage and scale our privacy and AI governance programs and support our security team. This role will oversee the continued development of AI governance, ensure compliance with global privacy regulations, support product teams on privacy matters, support the security team on all incident response matters, and provide strategic guidance on privacy and AI-related contractual issues. The ideal candidate will have deep expertise in privacy and cybersecurity law, AI governance, and data protection, as well as strong leadership skills to mentor and manage a team.

Meta - Director & Associate General Counsel, Data Protection (AI)

Meta is seeking an experienced and motivated lawyer to lead our data protection legal team’s work on a range of issues related to Artificial Intelligence (AI) across Europe and the United Kingdom, based in our European headquarters in Dublin (preferred) or in London. This role includes opportunities to advise Meta’s product and business teams on emerging requirements around artificial intelligence and automated decision-making, working on cutting-edge issues in a fast-paced, fun environment. The role will be responsible for managing a team of expert lawyers, leading strategic legal counseling to internal legal, product, operations and business teams through all phases of product development, and engaging with European data protection authorities where required. An ideal candidate for the role will have a solid understanding of data protection, privacy and AI ethics principles and a strong grasp of online technologies. The candidate must be a clear communicator, be able to collaborate across functions (including Meta’s global legal counselling and product teams), and have strong managerial experience. You will join a collegial and growing team of privacy lawyers who identify, evaluate and address privacy risk in light of emerging laws, compliance obligations and policies.

Surveillance Technology Oversight Project - Executive Director

The Surveillance Technology Oversight Project (“S.T.O.P.”) is looking for an experienced public interest leader to usher in a new era for our organization as our next Executive Director. S.T.O.P. fights state and local government surveillance across New York and the country, strengthening privacy and civil rights, and creating a model for challenging police surveillance across the globe. Our work highlights the discriminatory impact of surveillance on communities of color (and particularly the unique trauma of anti-Black policing), Muslim Americans, immigrants, and the LGBTQ+ community. The Executive Director will lead S.T.O.P.’s growing cohort of staff, interns, volunteers, researchers, and pro bono attorneys, helping expand the six-year-old civil rights organization with vision and diplomacy. The Executive Director will be responsible for overseeing every area of the organization’s work, including development, communication, litigation, lobbying, research, coalition building, and more. We are seeking a master storyteller who can demystify some of the newest, most alarming technologies in existence, staying ahead of the Orwellian curve.

🗒️You can find these roles and more, updated daily, on our Responsible Tech Job Board, and they are also shared in our Slack community.

💪Let’s co-create a better tech future


Subscribe to All Tech Is Human’s main newsletter for Responsible Tech updates!

🦜Looking to chat with others in Responsible Tech after reading our newsletter?

Join the conversations happening on All Tech Is Human’s Slack (sign in | apply).

We’re building the world’s largest multistakeholder, multidisciplinary network in Responsible Tech. This powerful network allows us to tackle the world's thorniest tech & society issues while moving at the speed of tech.

Reach out to All Tech Is Human’s Executive Director, Rebekah Tweed, at Rebekah@AllTechIsHuman.org if you are hiring and would like to work with All Tech Is Human to find candidates who are passionate about responsible technology or if you’d like to inquire about featuring a role in this newsletter!